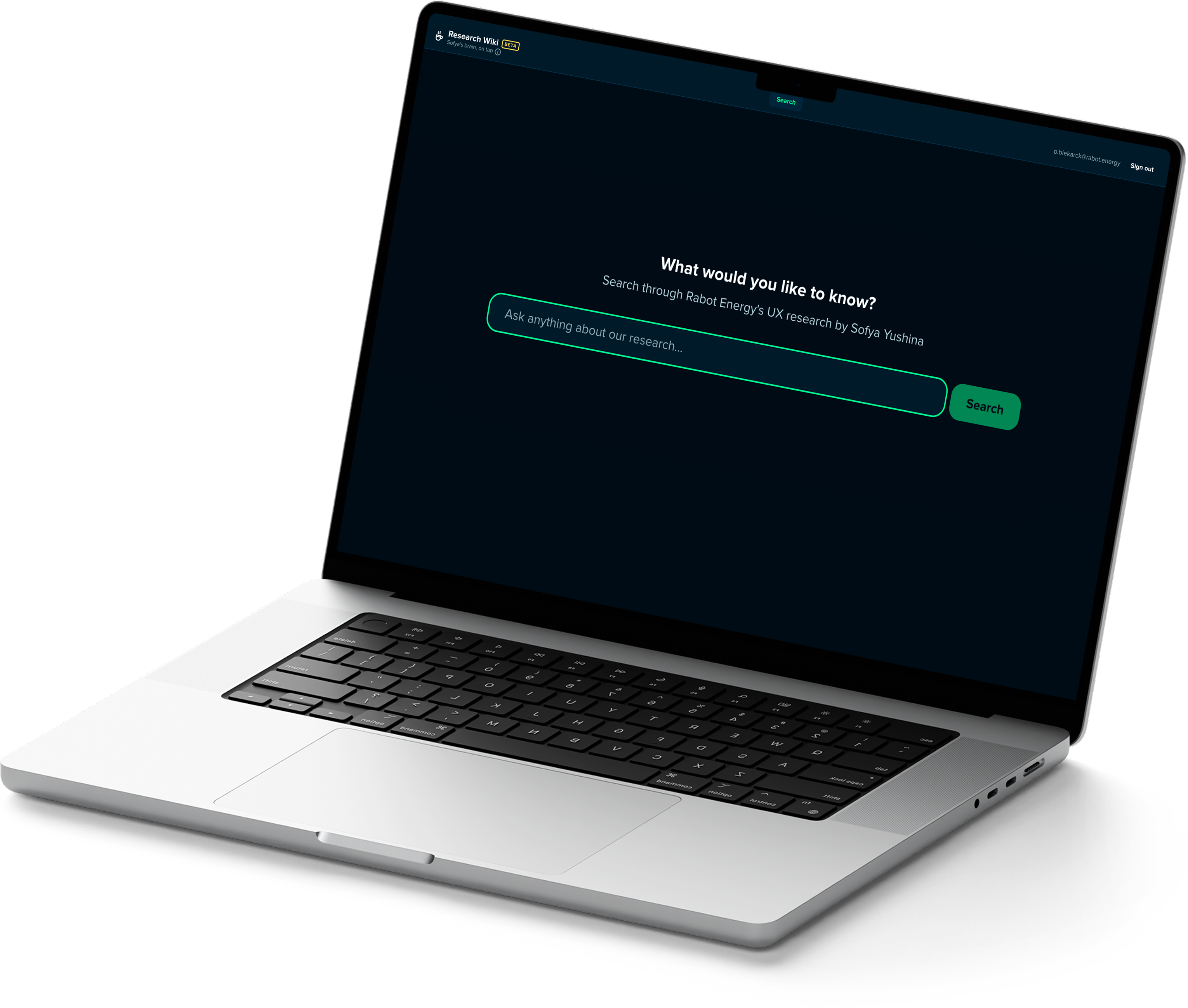

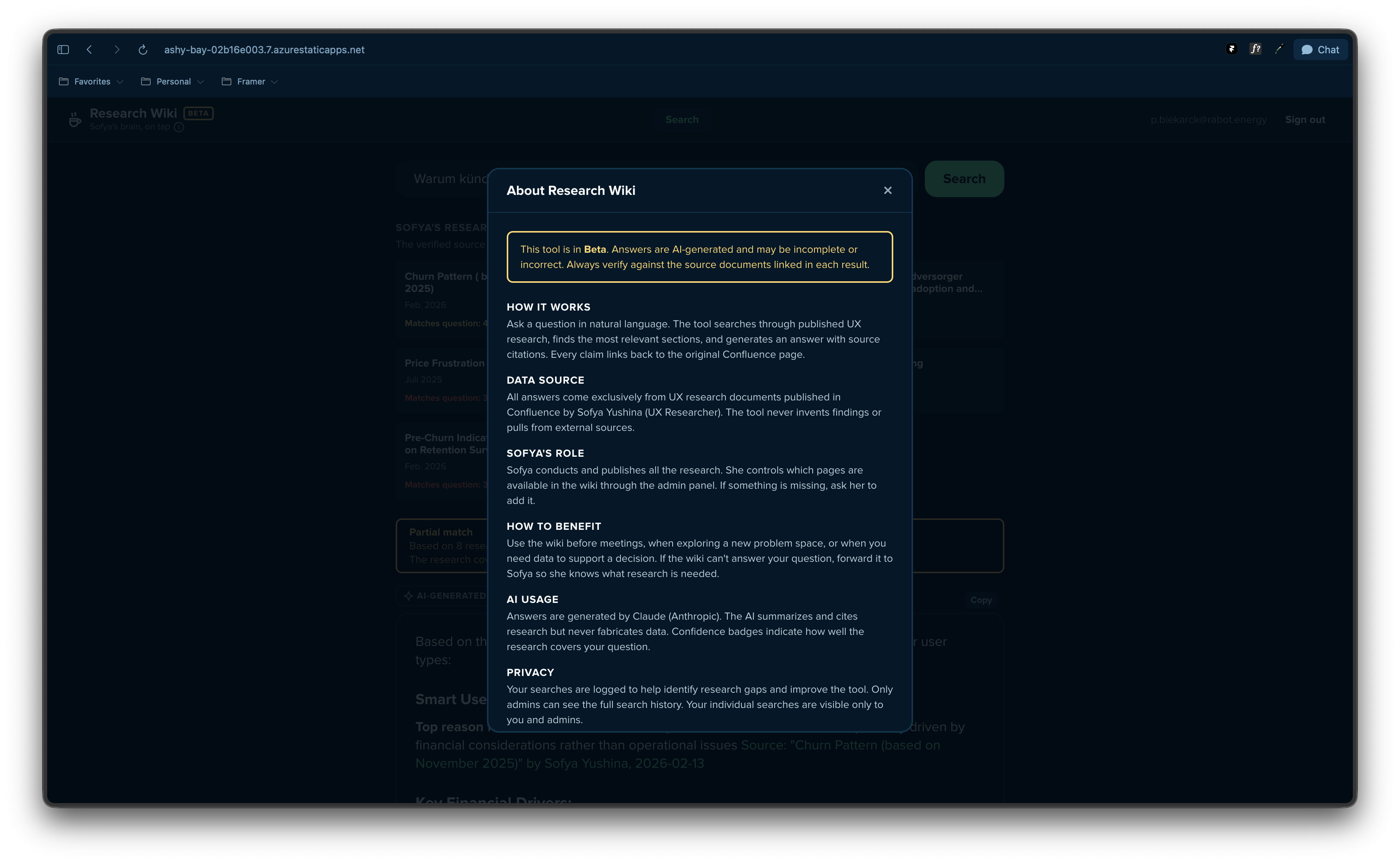

The Research Wiki is in pilot with Sofya Yushina. She is the quality gate: she controls which Confluence pages are live in the system and provides feedback on answer quality as it runs.

Colleagues expressed interest verbally when the tool was previewed internally, but no adoption data is available yet. Broader rollout is pending the outcome of the pilot.

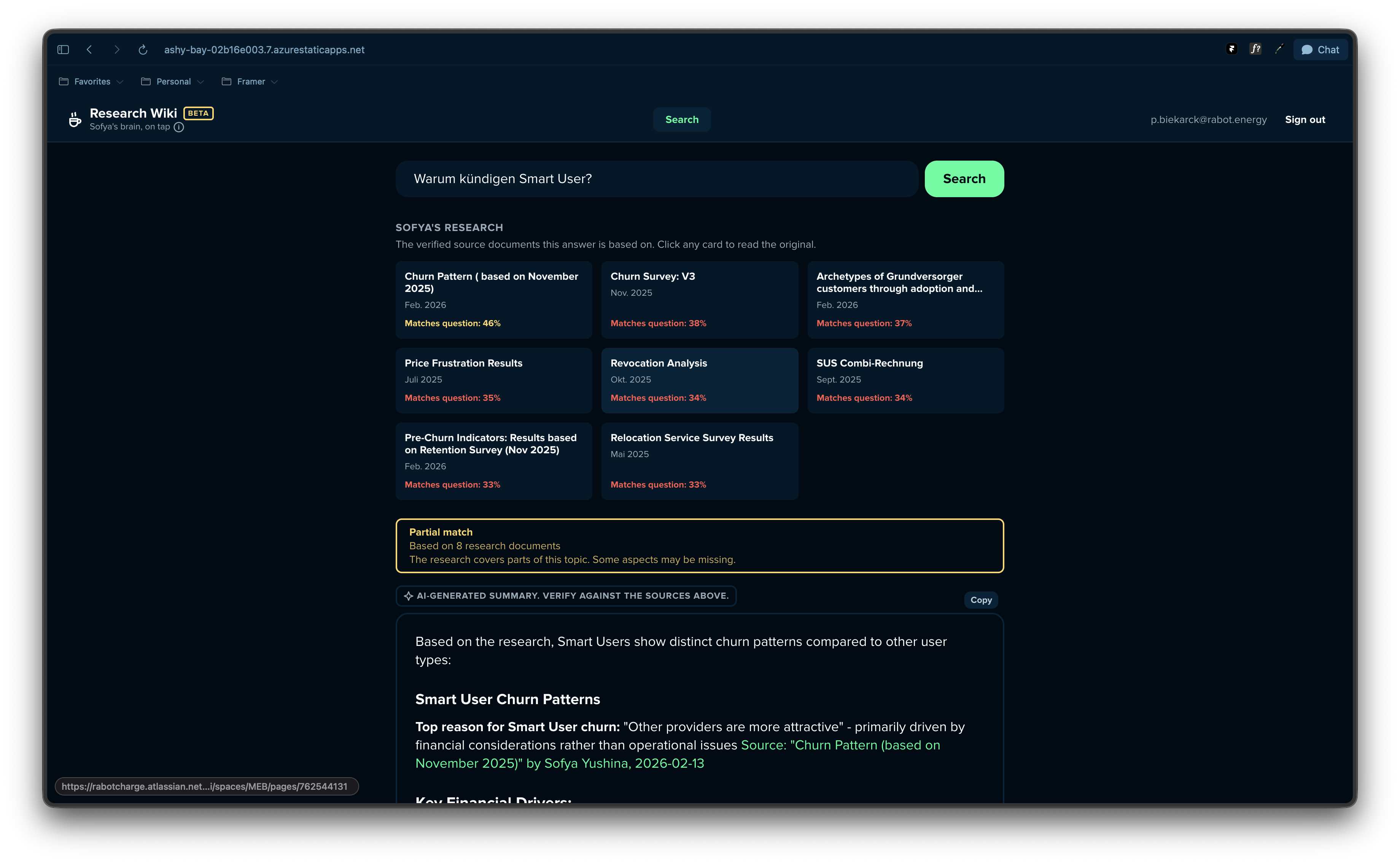

Four things would be worth measuring: the number of searches per week, the confidence score distribution (a proxy for research coverage), the number of questions forwarded to Sofya (a signal of where the research archive has gaps), and qualitative feedback on whether the tool changed how any product decisions were made. The last one is the only metric that actually matters.
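The first three measures above could be computed from a simple search log. The following is a minimal sketch, not a description of how the tool works today: the `SearchEvent` record, its field names, the 0 to 1 confidence scale, and the bucket thresholds are all assumptions made for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class SearchEvent:
    """One logged search (hypothetical record shape)."""
    day: date          # date the search was run
    confidence: float  # answer confidence, assumed to lie in [0, 1]
    forwarded: bool    # True if the question was forwarded to Sofya


def iso_week(d: date) -> str:
    """Label a date with its ISO year and week, e.g. '2024-W01'."""
    year, week, _ = d.isocalendar()
    return f"{year}-W{week:02d}"


def summarize(events, low=0.5, high=0.8):
    """Aggregate a list of SearchEvent into the three countable measures.

    The low/high thresholds are placeholder values, not tuned ones.
    """
    searches_per_week = Counter(iso_week(e.day) for e in events)

    confidence_buckets = Counter()
    for e in events:
        if e.confidence < low:
            confidence_buckets["low"] += 1
        elif e.confidence < high:
            confidence_buckets["medium"] += 1
        else:
            confidence_buckets["high"] += 1

    forwarded_count = sum(1 for e in events if e.forwarded)

    return {
        "searches_per_week": searches_per_week,
        "confidence_buckets": confidence_buckets,
        "forwarded_count": forwarded_count,
    }


if __name__ == "__main__":
    sample = [
        SearchEvent(date(2024, 1, 1), 0.9, False),
        SearchEvent(date(2024, 1, 3), 0.3, True),
        SearchEvent(date(2024, 1, 8), 0.6, False),
    ]
    print(summarize(sample))
```

Whether the tool changed a product decision is not something a log like this can capture, so that measure would still have to come from conversations with the people using it.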